The Cyber AI Transformation Model

Attackers are moving from manual work to AI agent systems. Here is a practical model for moving your security program from human review to machine-speed defense.

Anthropic just went from a $9B to $40B run rate in a few months. That is faster than Google, Facebook or Amazon grew in their early years. The obvious takeaway is that AI is compressing years of work into weeks. Every company feels that now. But the security takeaway is scarier.

Here is my hot take: Cloud transformation was about not falling behind your competitors. You had years to figure it out. AI transformation in cybersecurity is about not falling behind your attackers. And you do not have years.

If attackers get 10x faster and your team still runs on tickets, reviews, manual checks and weekly meetings, the math breaks. The penalty for moving slowly is not some vague “digital transformation” miss. It is a breach.

We built maturity models to help companies move to the cloud. We do not have the same map for AI in cybersecurity yet. That is what this post is about: a Cyber AI Transformation Model, a simple way to see where your security org is today and where it needs to be next quarter, not five years from now.

From Workshops to Factories: We Have Seen This Before

AI is not the first time machines changed how work gets done. The pattern is over 250 years old. I wrote about this in my Claude Mythos piece, but the short version is that the AI revolution looks a lot like the Industrial Revolution. It is moving through the same basic phases.

Phase 1: The Artisan Workshop. Before the 1760s, one craftsperson used hand tools to turn raw material into finished goods. The tool helped the human, but the human made every decision and every cut. Output was capped by one person’s skill, time and energy.

Phase 2: The Shaft-Centered Factory. Early factories were built around the power source, not the work. Workers and machines clustered around a waterwheel or steam engine, tied together by shafts and leather belts. Output jumped as machines took over repetitive tasks, but the whole system still depended on where the power lived.

Phase 3: The Assembly Line. Ford’s real breakthrough was not the car. It was the system behind the car. He broke production into coordinated steps and arranged the factory around the thing being produced. Work no longer meant one person building the whole thing. It meant a system of specialized stations moving in rhythm.

Phase 4: The Lights-Out Factory. Modern factories can run with almost no people on the floor. Robots coordinate with robots. The system monitors itself, corrects itself and keeps moving. Humans design the factory, set the rules and step in when something falls outside the system.

Each phase builds on the one before it. That is the part that matters for cybersecurity: AI will not jump straight to autonomy. It will compound its way there.

The Four Levels of AI Transformation

These four levels were heavily inspired by Notion’s recent AI Transformation Model, which I’d encourage you to read. The Industrial Revolution maps to it almost perfectly. The key idea is simple: the levels compound.

Level 1: AI as a Thought Partner. This is the artisan workshop. You ask ChatGPT or Claude to explain a regex pattern, review a design or debug a test. It may even have access to your repo, docs or logs, but it mostly sees the artifact in front of it. It does not know which incident process applies, what risks your security team cares about or how work actually gets approved. It can think with you, but you still carry the company context. To move from Level 1 to Level 2, you need context engineering: connecting AI to the operating context around the work, not just the files.

Level 2: AI as an Assistant. This is the shaft-centered factory. The AI now understands more of the company map: ownership, past incidents, architecture history, approval paths and risk taxonomy. You ask why a test is flaky and it does not just inspect the code. It knows that this service has strict change controls because of a past incident and flags the right owner before suggesting a fix. The AI stops giving answers that are only technically correct and starts giving answers that fit how your company operates. To move from Level 2 to Level 3, you need workflow design and trust: breaking real work into steps the agent can execute with clear review points and escalation paths.

Level 3: AI as a Teammate. This is the assembly line. Claude Code takes a GitHub issue, writes the code, runs the tests and opens a PR for review. You do not prompt it line by line. The agent is triggered by an event, such as a new issue or an incident, works through the process and handles the repetitive execution. You handle the judgment calls. To move from Level 3 to Level 4, you need orchestration: specialized agents sharing state, handing off work and escalating decisions with clear guardrails.

Level 4: AI as the System. This is the lights-out factory. A primary coding agent receives a feature spec, breaks it into sub-tasks, spins up specialized agents for frontend, backend and tests, coordinates their work, runs CI and flags only the decisions that need human judgment. The developer’s role shifts from writing every line of code to designing and tuning the system that writes the code.

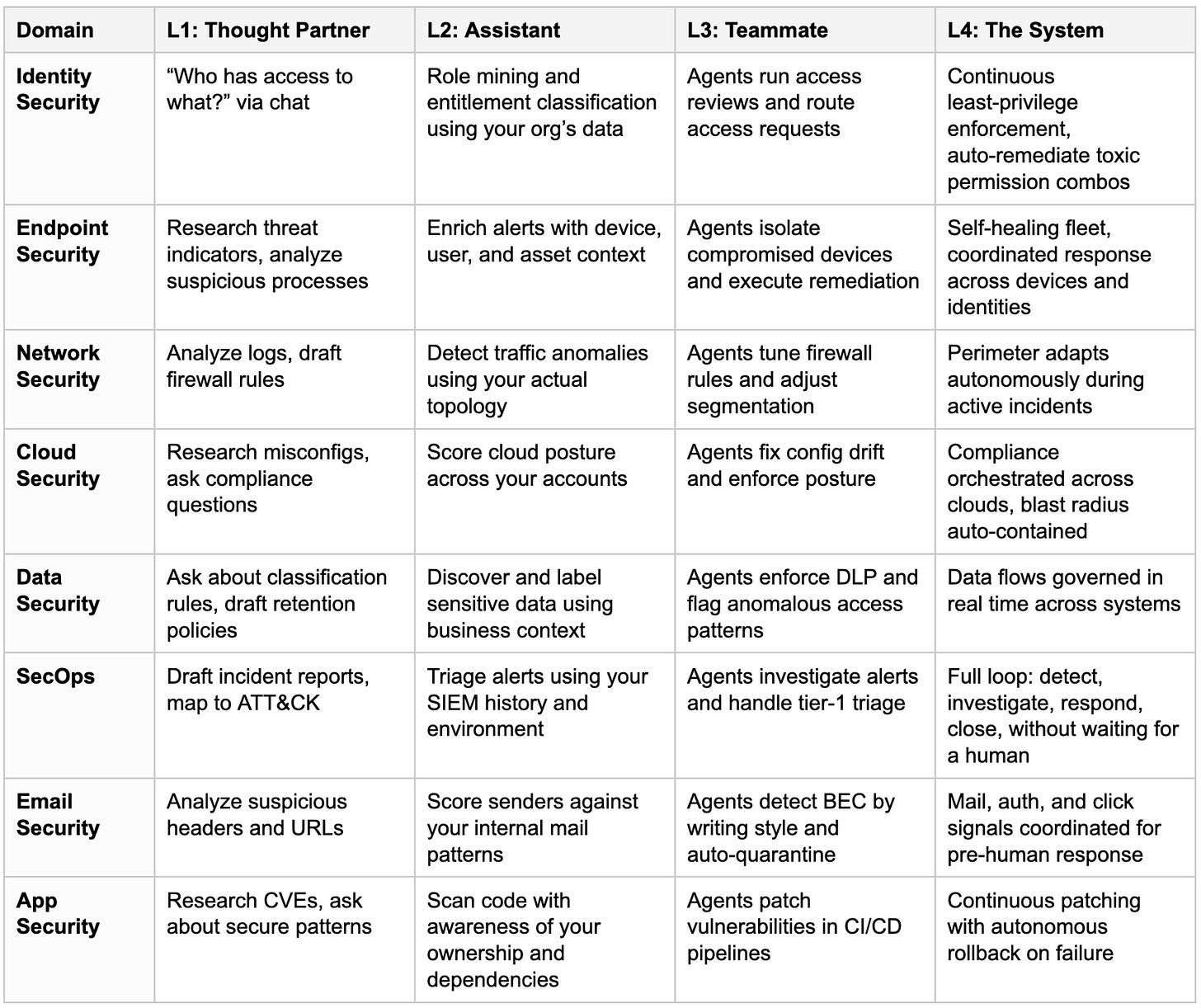

The Cyber AI Transformation Matrix

Maturity will not move evenly across security. That is expected. Identity might be at Level 3 while network security is still at Level 1. Treat this as a domain-by-domain assessment, then decide where machine-speed defense matters most.

The goal is not uniform progress. It is choosing where Level 4 matters most. In my view, identity belongs at the top of that list: most major breaches start with a compromised account, and access volume has outgrown human review.
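One way to turn a domain-by-domain assessment into a priority list is to score each domain by the gap between its current and target level, weighted by how much machine-speed defense matters there. The sketch below assumes that scoring rule, which is an illustration rather than part of the model itself, and the domains and numbers are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DomainAssessment:
    domain: str
    current_level: int  # 1-4: where the domain sits today
    target_level: int   # 1-4: where it needs to be next quarter
    criticality: int    # 1-5: how much machine-speed defense matters (a judgment call)

    @property
    def gap(self) -> int:
        return max(0, self.target_level - self.current_level)


def prioritize(assessments: list[DomainAssessment]) -> list[str]:
    """Rank domains that still have a gap by gap x criticality, largest first."""
    ranked = sorted(assessments, key=lambda a: a.gap * a.criticality, reverse=True)
    return [a.domain for a in ranked if a.gap > 0]


# Illustrative scores only, not a benchmark.
matrix = [
    DomainAssessment("identity", current_level=2, target_level=4, criticality=5),
    DomainAssessment("network", current_level=1, target_level=2, criticality=3),
    DomainAssessment("appsec", current_level=2, target_level=3, criticality=4),
    DomainAssessment("email", current_level=3, target_level=3, criticality=2),
]
print(prioritize(matrix))  # ['identity', 'appsec', 'network']
```

Domains already at their target (email in this example) drop off the list, so the output reads as "where to invest next quarter" rather than a full scorecard.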

Case Study: Identity Security Transformation with Lumos

Identity is where human review breaks down fastest. There are too many users and permissions, and too many exceptions and decisions live only in people’s heads. That is why the AI transformation model maps so well to identity.

Here is a real example from a Fortune 100 company using Lumos. Founded in the early 1900s, the company had more than a century of access debt. Thousands of users held hundreds of thousands of permissions. Figuring out what was appropriate, stale or dangerous had become humanly impossible.

Their IAM leader owned the problem with CISO sponsorship. Here is how they moved through the levels.

Level 1: Albus Chat as Thought Partner. They started with Lumos’s Albus Chat to understand the shape of the problem. Albus is the AI engine that powers Lumos. With thousands of human and non-human identities in scope, the first job was not remediation. It was comprehension. How big is the problem? Where are the riskiest gaps? Which permissions are most common? Which identities look unusual? AI helped them see an access landscape that had become too large for any team to hold in its head.

Level 2: Context Engineering with the Knowledge Hub. Next, they connected Albus to their environment through the Lumos Knowledge Hub. They uploaded their internal authentication policies and privilege classification taxonomy as context. The AI went from answering generic questions to surfacing findings that matched how the company operated. Not “this permission exists,” but “this credential violates your policy” and “this identity has write privileges it does not need or use.” Context turned the AI from a chatbot into an analyst.
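What changes at Level 2 is the context the checks run against. Here is a minimal sketch, assuming a hypothetical privilege taxonomy, a single authentication rule and per-grant last-used dates; none of these reflect the Lumos Knowledge Hub's actual format. Once the policy and taxonomy are supplied, the same raw grant data produces the two kinds of findings quoted above.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical context a security team might supply (not a Lumos format):
# a privilege classification taxonomy and one authentication policy rule.
PRIVILEGE_TAXONOMY = {
    "billing:read": "read",
    "billing:write": "write",
    "prod-db:admin": "admin",
}
REQUIRED_AUTH_FOR_PRIVILEGED = "hardware_key"
UNUSED_AFTER = timedelta(days=90)


@dataclass
class Grant:
    identity: str
    permission: str
    auth_method: str           # e.g. "password", "totp", "hardware_key"
    last_used: Optional[date]  # None means never used


def findings(grants: list[Grant], today: date) -> list[str]:
    """Turn raw grants into policy-aware findings (privileged grants only)."""
    out = []
    for g in grants:
        tier = PRIVILEGE_TAXONOMY.get(g.permission, "unclassified")
        if tier not in ("write", "admin"):
            continue
        if g.auth_method != REQUIRED_AUTH_FOR_PRIVILEGED:
            out.append(f"{g.identity}: {g.permission} violates auth policy ({g.auth_method})")
        if g.last_used is None or today - g.last_used > UNUSED_AFTER:
            out.append(f"{g.identity}: {tier} privilege {g.permission} appears unused")
    return out


today = date(2025, 6, 1)
grants = [
    Grant("alice", "billing:read", "password", date(2025, 5, 30)),
    Grant("bob", "billing:write", "totp", date(2025, 5, 1)),
    Grant("svc-report", "prod-db:admin", "hardware_key", None),
]
for line in findings(grants, today):
    print(line)
```

Without the taxonomy every grant looks alike; with it, Alice's read access is ignored, Bob's write access is flagged against the policy, and the never-used admin grant on the service account stands out.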

Level 3: Agentic Governance. The next phase on their roadmap is Agentic Access Reviews with Lumos. This is where AI moves from helping humans understand access to helping them govern it. Today, managers get asked to review hundreds of access items and most rubber-stamp them because they cannot inspect each permission with enough context. Lumos Agents ingest the permission data, flag risky or unusual access, route decisions to the right owners and take action when the evidence is clear. Humans stay in the loop, but they no longer carry the whole review by hand.
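A rough sketch of that triage step follows. It assumes three hypothetical signals per access item (days since last use, the share of peers holding the same access, and whether the taxonomy marks it sensitive) and made-up thresholds. It is not the Lumos Agents implementation. It only shows the shape of the decision: act alone when the evidence is clear and the blast radius is small, route anything risky or unusual to the owner, and leave the rest alone.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class AccessItem:
    user: str
    resource: str
    owner: str            # the person who should decide on this access
    days_since_use: int
    peer_share: float     # fraction of the user's peers holding the same access
    sensitive: bool       # from the privilege classification taxonomy


# Illustrative thresholds; real values would be tuned per organization.
STALE_DAYS = 180
UNUSUAL_PEER_SHARE = 0.1


def review(item: AccessItem) -> str:
    stale = item.days_since_use >= STALE_DAYS
    unusual = item.peer_share < UNUSUAL_PEER_SHARE
    if stale and not item.sensitive:
        return "revoke"               # clear evidence and low blast radius: act
    if stale or unusual or item.sensitive:
        return f"route:{item.owner}"  # risky or unusual: a human makes the call
    return "keep"                     # nothing to flag


def summarize(items: list[AccessItem]) -> Counter:
    # Count decisions by type, ignoring which owner a routed item goes to.
    return Counter(review(i).split(":")[0] for i in items)


items = [
    AccessItem("ana", "wiki", "it-team", 400, 0.9, False),
    AccessItem("ben", "payroll", "hr-lead", 5, 0.05, True),
    AccessItem("cy", "jira", "eng-mgr", 2, 0.8, False),
    AccessItem("dee", "prod-db", "db-owner", 200, 0.5, True),
]
print(summarize(items))
```

Of the four sample items, only two reach a human, and each goes to the resource owner instead of sitting in a manager's long list. Stale access to a sensitive system is routed rather than revoked automatically, since that is where a guardrail matters most.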

Level 4: Identity Agents Running Continuously. The final stage moves from periodic reviews to continuous protection. Lumos Identity Agents run on a scheduled or triggered basis and remediate threats as they appear. In this environment, agents detected a non-human identity with a toxic privilege combination, suspended it and created a ticket. No waiting for the next quarterly review. The factory now runs around the clock.
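The continuous version can be sketched as a scan that runs on a schedule or whenever an access-change event arrives. This assumes hypothetical toxic permission pairs and a `suspend` callback standing in for a real identity-provider call; it is not the Lumos Identity Agents API. Non-human identities holding a toxic combination are suspended and ticketed, as in the example above. Sending human accounts to a review ticket instead of suspending them is an assumption added for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

# Hypothetical toxic combinations: permission pairs no single identity should hold.
TOXIC_PAIRS = [
    frozenset({"create_vendor", "approve_payment"}),
    frozenset({"deploy_prod", "approve_change"}),
]


@dataclass
class Identity:
    name: str
    is_human: bool
    permissions: set[str]
    suspended: bool = False


def toxic_combos(identity: Identity) -> list[frozenset]:
    # A pair is present when it is a subset of the identity's permissions.
    return [pair for pair in TOXIC_PAIRS if pair <= identity.permissions]


def scan(identities: Iterable[Identity],
         suspend: Callable[[Identity], None],
         open_ticket: Callable[[str], None]) -> None:
    """One pass, run on a schedule or when an access-change event arrives."""
    for ident in identities:
        if ident.suspended:
            continue
        combos = toxic_combos(ident)
        if not combos:
            continue
        detail = "; ".join(" + ".join(sorted(c)) for c in combos)
        if ident.is_human:
            # Assumption: human accounts get a review ticket, not an automatic suspension.
            open_ticket(f"Review {ident.name}: toxic combination {detail}")
        else:
            suspend(ident)
            open_ticket(f"Suspended {ident.name}: toxic combination {detail}")


def suspend_identity(ident: Identity) -> None:
    ident.suspended = True  # stand-in for a call to the identity provider


tickets: list[str] = []
inventory = [
    Identity("ci-bot", False, {"deploy_prod", "approve_change", "read_logs"}),
    Identity("maria", True, {"create_vendor", "approve_payment"}),
    Identity("etl-job", False, {"read_logs"}),
]
scan(inventory, suspend_identity, tickets.append)
for t in tickets:
    print(t)
```

Because `scan` is a single stateless pass, the same function can sit behind a cron-style scheduler and an event hook, which is the difference between a quarterly review and continuous protection.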

Building Your Own Factory

The defenders who win will be the ones who build their own agent factories. They will connect their tools into one coordinated system that operates at machine speed.

Use this model to have an honest conversation with your leadership team. Map where each domain sits today. Identify which areas are still at Level 1 and which are ready for Level 3.

The CEO and board need to champion it. The CISO and CIO need to fund it. VP and Director-level leaders need to execute it. This model gives everyone shared language for where you are and where you are going. More importantly, it gives you permission to move in quarters, not years.

The threat will not wait. Neither should you.

If you want the full Cyber AI Transformation Matrix with detailed breakdowns for each domain, you can download the PDF and graphics here: [link]

If you want to chat about the matrix, send me a note at andrej@lumos.com or message me on LinkedIn.

With positive vibes,

Andrej